The Ultimate Guide to AI Ethics & Governance

A curated UK edition of TechDay news, analysis, interviews, reviews, job moves, and related resources for AI Ethics & Governance.

What to know about AI Ethics & Governance

AI Ethics & Governance concerns the responsible development, deployment, and oversight of artificial intelligence technologies to ensure they align with societal values, protect individual rights, and promote transparency. As AI systems become increasingly integrated into various sectors—from healthcare and business to creative industries and public services—it is crucial to address challenges such as data privacy, bias, security vulnerabilities, and ethical accountability.

This tag brings you insightful stories on current efforts to establish ethical standards, government policies, and corporate frameworks that guide AI use responsibly. You'll find discussions on safeguarding data privacy, mitigating environmental impacts, promoting diversity and inclusion, and tackling emerging risks like misinformation and cyber threats. The featured content also covers collaborative projects between academia, industry, and regulators aiming to enhance AI governance globally.

Whether you're a professional, policymaker, or simply interested in how AI can benefit society without compromising ethics, exploring these stories will provide a comprehensive understanding of the ongoing work and critical questions shaping the future of AI. Click through to learn about innovations, challenges, and strategies that ensure AI technologies contribute positively and equitably to our world.

UK AI Ethics & Governance News

Regional stories with direct local relevance

UK public sector races ahead with AI as trust lags

Public confidence is trailing adoption, with nearly half of citizens uneasy about AI in services despite rapid uptake by public bodies.

OneAdvanced adds AI tools to IQ for regulated sectors

Customers in regulated sectors can now access AI workflow and compliance tools as OneAdvanced expands its IQ platform across six markets.

Cato Networks opens AI hub in London for R&D growth

The new Holborn site will add engineering jobs as demand rises for secure AI tools among businesses and the company seeks deeper UK roots.

Lumera warns pension trustees on AI governance controls

Pension schemes face tighter scrutiny as reform-driven data growth makes AI oversight, accountability and human intervention more urgent.

Gravitee launches Gamma as UK AI agents top 713,130

British firms now use 713,130 AI agents, sharpening pressure for tighter oversight as Gravitee rolls out Gamma to govern them.

Parloa opens London office as AI expansion gathers pace

London's rising AI investment is drawing Parloa into the capital as the company expands its European footprint and customer base.

Analyst Insights

Research and market analysis connected to AI Ethics & Governance

Parloa opens London office as AI expansion gathers pace

Sentra launches AI data readiness platform for enterprises

Salt Code enforces security policies in AI coding tools

Claroty launches AI security agent for critical systems

UK firms pour into AI despite weak returns, study finds

Featured News

Snowflake unveils platform upgrades for CoCo, CoWork

Enterprises will get tighter AI controls as Snowflake adds blocking policies, multi-party authorisation and new agentic tools at Summit.

Exclusive: Reco COO on securing the AI inside your SaaS stack

Reco COO Zoe Hillenmeyer says enterprises typically underestimate their AI agent exposure by a factor of ten and that gap is widening.

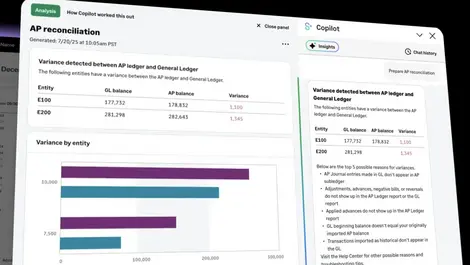

Sage Intacct builds explainable AI into accounting

Accountants facing staff shortages may gain faster workflows, as Sage Intacct’s new agent exposes its calculations, sources and audit trail.

Sage branding itself on trust in AI world of 'big voices'

Trust is emerging as a selling point for finance software as Sage warns that opaque AI can leave CFOs answerable for costly errors.

Exclusive: Denodo's Dominic Sartorio on perfect data vs the right data

Trust in enterprise AI is being undermined as Denodo research finds most firms still lack live, context-aware data for production use.

Google Cloud CEO sets out enterprise AI agent plan

Enterprises will get one place to build, govern and run AI agents, as Google Cloud expands Gemini Enterprise across models, data and security.

Exclusive: Google Cloud reshaping finance with agentic AI

Banks must move beyond isolated pilots if they want agentic AI to deliver enterprise-wide gains, Google Cloud says.

Google sees retail success with agentic commerce push

Retailers are using Google’s new AI suite to speed up shopping and support, with Bunnings already live and UCP adoption starting to grow.

Exclusive: Grafana Labs' Jen Villa on targeting the AI observability gap

The new tools aim to help firms spot faulty AI outputs and data risks sooner as production deployments outpace monitoring methods.

Exclusive: Celonis global banking head says AI rollout hinges on process intelligence

Banks risk wasting AI spending unless they first map how work really flows, as Celonis says process intelligence is becoming phase zero.

TrendAI: Evolving the cybersecurity value proposition

New research shows two-thirds of Australian business and IT leaders feel pressured to approve AI projects while overlooking security risks.

Diligence the watchword as oversight lags AI governance

Most boards are using AI for routine tasks, but only 3% have woven it into risk oversight, leaving organisations exposed to fresh hazards.

UiPath Accelerates AI in Software Development and Testing

UiPath is pushing AI deeper into software testing, promising autonomous agents that transform quality assurance and developers' roles.

Cloudera and Svitla Systems bring trusted AI to healthcare

Cloudera and Svitla team up to build trusted, clinician‑centric AI that unifies patient data while safeguarding privacy and consent.

Rise of AI Agents introduces new infosec risk: Okta

Okta warns that surging numbers of uncontrolled AI agents pose a major identity and access risk as they become the new digital workforce.

Elastic says AI search & context now decide customer loyalty

Elastic argues that in an AI-obsessed market, robust search across messy data is the real foundation of trustworthy, profitable experiences.

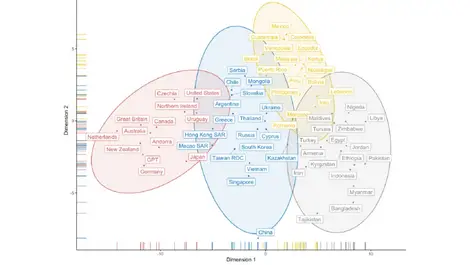

AI may sound human...but whose values does it reflect?

Harvard research finds “human-like” AI mirrors Western liberal values, raising concerns over cultural bias as it spreads worldwide.

Expert Columns

It's time insurance seeks out next gen responsible technologies

Why so many enterprise AI programmes fail before they scale

Why data governance is a core IT responsibility in the AI era

Unlocking science: building AI researchers can trust

Why the next phase of AI in business will be about workflow control, not just generation

From AI Adoption to AI Advantage

Why trust is the bottleneck for AI-driven operations

Why workplace AI is creating a quiet legal risk most businesses haven't caught up with yet

Is your data ready for AI? 5 steps before deploying an agent

Turning practical AI use cases into measurable impact

Interviews

Interviews and video coverage from the network

Recent AI Ethics & Governance News

UK firms lag on AI cyber defences, Wavestone warns

Despite rising cyber maturity, most large organisations still lack basic protections against AI-specific attacks such as prompt injection, Wavestone says.

Financial leaders fear AI could harm vulnerable customers

Most firms have expanded customer-facing AI even as a survey found 77% fear their strategies could harm vulnerable customers.

It's time insurance seeks out next gen responsible technologies

Trust is now a commercial issue for insurers, as Consumer Duty and wary customers push them towards transparent AI and fairer claims handling.

Lucid adds LeanIX, Ardoq links for enterprise AI rollouts

Enterprises may struggle to scale AI without clearer records of systems and processes, Lucid said, as it adds LeanIX and Ardoq links.

Why so many enterprise AI programmes fail before they scale

Pressure to show returns is exposing weak data, governance and skills, leaving many pilot projects stuck before they reach production.

UK firms pour into AI despite weak returns, study finds

Weak networks and poor data are leaving most UK AI projects short of returns, as firms keep ramping up spending to avoid falling behind.

Bournemouth University picks ICS.AI for AI rollout

Staff at Bournemouth University will get governed AI tools first, as the institution maps use cases and sets a roadmap for wider adoption.

UK firms boost cyber & AI spending, Barclays survey

Confidence in defence remains patchy as 68 per cent of UK business leaders plan higher cyber spending and 46 per cent fear new tools widen threats.

Provenance appoints AI advisory board for retail shift

Retailers risk being overlooked by AI shoppers unless product data is verified and structured for machine-led search and recommendations.

iMeta extends West Midlands data boot camp contract

More learners in the West Midlands will get funded data training as iMeta's boot camp extension targets shortages in digital and AI skills.

UK workers widely use unapproved tools, Mitel warns

Most UK staff are using unauthorised chat and AI apps at work, raising fears of data leaks, compliance breaches and lost oversight.

The Mythos moment: Why 'unknown exposure' is becoming the biggest cyber risk of 2026

Security teams face a shrinking window to spot and fix flaws as AI models like Mythos find exposures in minutes, not days.

AI FOMO is changing the game for digital marketing professionals

Burnout is rising as marketers race to master AI, while more than 70% of teams now work beyond sustainable capacity.

Isle of Man launches first data asset law framework

The new regime could help firms record and trade governed datasets as assets, as Isle of Man officials move to implement the register.

Unlocking science: building AI researchers can trust

Researchers risk wasting time on untrustworthy generic tools unless AI is built for rigorous, traceable science and human scrutiny.

Why the next phase of AI in business will be about workflow control, not just generation

Businesses now need AI that fits into managed processes, as speed alone can create fragmentation and weaken oversight across customer-facing work.

UK firms fear supplier AI cyber risks, QBE finds

Most UK businesses using AI are not checking suppliers' systems, even as cyber incidents and revenue losses linked to third parties rise.

Facewatch appoints Dean Armstrong KC as Data Chief

The hire comes as live facial recognition in British shops faces mounting scrutiny over privacy, accountability and safeguards for shoppers and staff.

UK consumers want human checks in insurance AI use

Human oversight remains a red line for many policyholders, with only 30% of UK consumers happy for insurers to use AI on pricing decisions.

UK senior leaders more likely to use Shadow AI tools

TrustedTech said 62% of UK senior leaders use unauthorised AI tools at work, intensifying worries over data leaks and policy breaches.